MCP Core Concepts

Everything you need to understand MCP from scratch: its architecture, message types, and transports.

What is MCP and why should you care?

If you’ve been building software for any meaningful amount of time, you’ve watched the industry cycle through integration standards — SOAP, REST, GraphQL, gRPC. Each one solved a real problem: how do two systems talk to each other in a structured, predictable way?

The Model Context Protocol (MCP) is the latest entry in that lineage, but it solves a fundamentally different problem. MCP isn’t about how your frontend talks to your backend. It’s about how AI models talk to tools, data sources, and external systems.

Think about it this way: when you use an AI assistant like Claude, it’s incredibly good at reasoning and generating text. But it’s trapped inside its own context window. It can’t check your calendar, query your database, search the web, or trigger a deployment pipeline — unless someone gives it a structured way to do those things. That structured way is MCP.

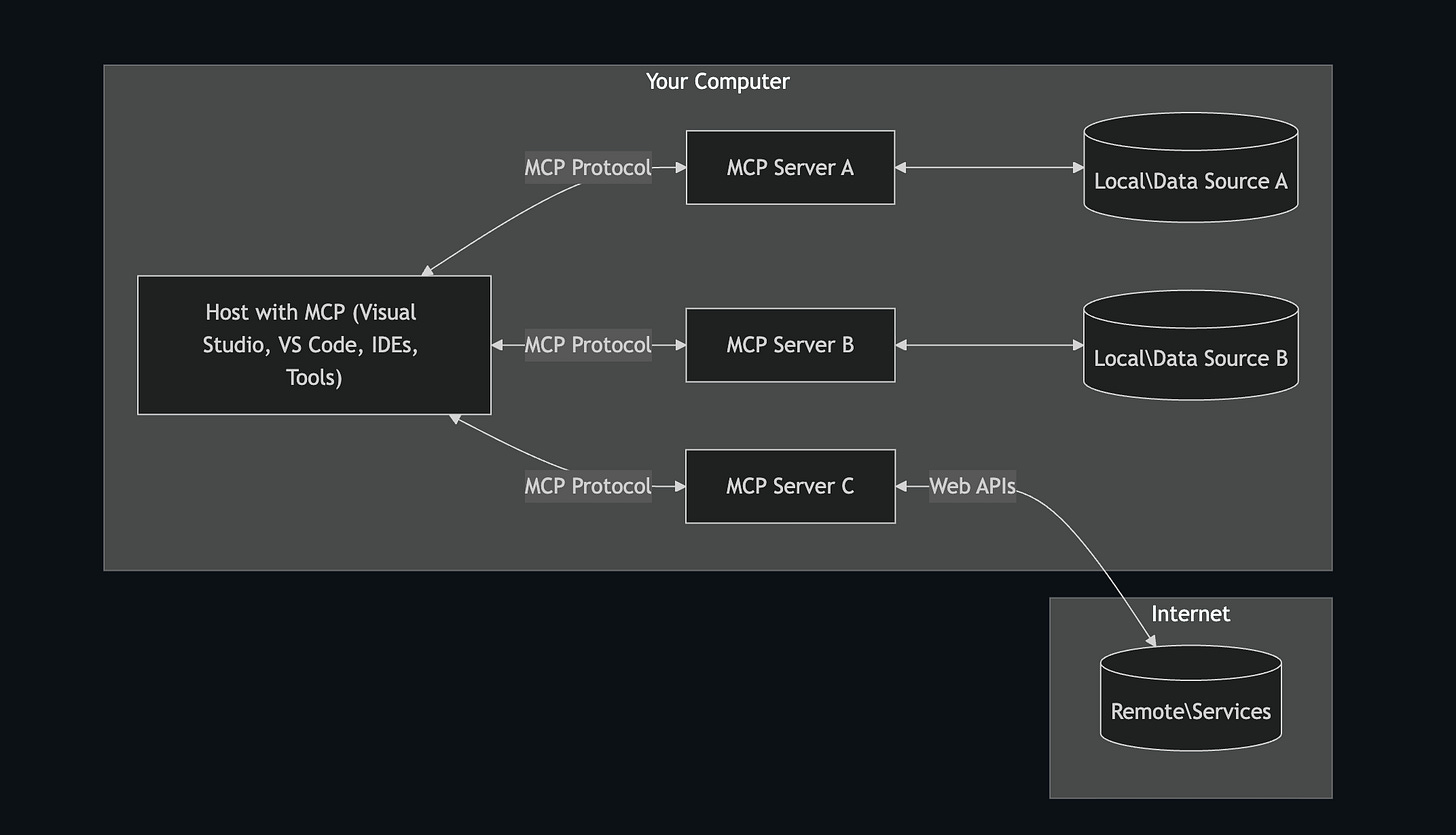

MCP is an open protocol, originally developed by Anthropic but designed to be vendor-neutral and community-driven. It defines a standard way for AI applications (the “client” side, acting on behalf of the model) to discover, negotiate with, and invoke capabilities exposed by external systems (the “server” side).

The problem MCP solves

Before MCP, every AI tool integration was bespoke. If you wanted Claude to interact with GitHub, you’d build a custom plugin. If you wanted it to query Postgres, you’d build another one. Each integration had its own message format, its own authentication story, its own error handling. It was like the pre-REST era — a mess of point-to-point integrations that didn’t compose.

The consequences were painful:

For tool builders, you had to write separate integrations for every AI provider — one for OpenAI’s function calling format, another for Claude’s tool use format, another for Gemini. The same tool, reimplemented three times.

For AI application developers, you couldn’t mix and match. Switching AI providers meant rewriting all your tool integrations. You were locked in — not by the model itself, but by the plumbing around it.

For enterprises, the security story was a nightmare. Each custom integration had its own trust model. There was no standardized way to enforce consent, rate limiting, or data flow controls across all AI-tool interactions.

MCP solves all three of these by establishing a single, open protocol that any client and any server can implement. Build your tool once as an MCP server, and it works with every MCP-compatible AI client. Switch your AI provider, and all your tools keep working. Enforce your security policies in one place, and they apply to every integration.

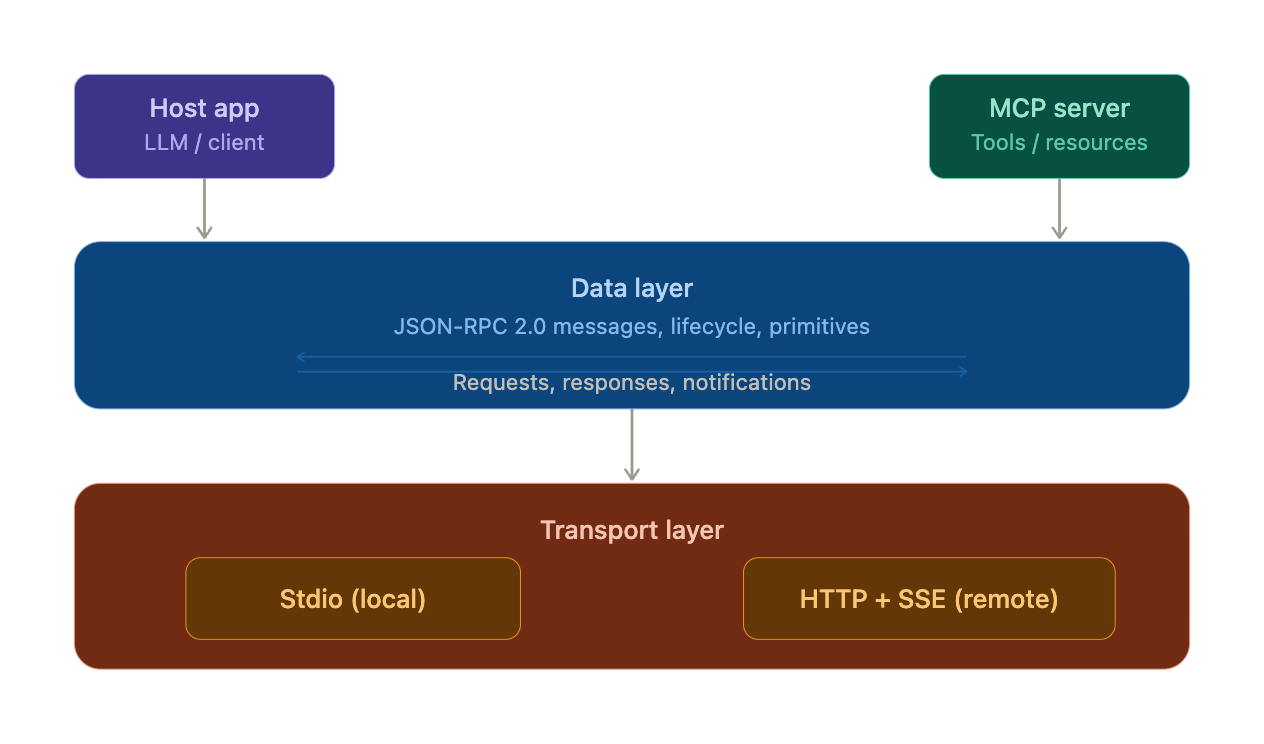

The two-layer architecture

MCP's architecture is simple. The protocol is split into two layers, each with a clearly defined responsibility. If you've ever worked with the OSI model, or with the separation between ASP.NET Core's middleware pipeline (how requests are processed) and Kestrel (how bytes move over the wire), this pattern will feel immediately familiar.

The Data Layer — what to say

The Data Layer is the brain of the protocol. It defines what clients and servers say to each other — the structure of messages, the semantics of each operation, and the rules governing the conversation lifecycle.

It’s built on top of JSON-RPC 2.0, which is a lightweight remote procedure call protocol that uses JSON as its data format. If you’ve never encountered JSON-RPC before, think of it as “HTTP endpoints, but instead of URLs and verbs, you have a method string and a params object.”
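To make the "method string and params object" idea concrete, here is a minimal sketch of a JSON-RPC 2.0 request and its matching response. The helper names (`make_request`, `make_result`), the `get_weather` tool, and its arguments are all hypothetical and exist only for illustration; the envelope fields (`jsonrpc`, `id`, `method`, `params`, `result`) come from JSON-RPC 2.0 itself.

```python
import json


def make_request(request_id, method, params):
    """Build a JSON-RPC 2.0 request object."""
    return {"jsonrpc": "2.0", "id": request_id, "method": method, "params": params}


def make_result(request_id, result):
    """Build the success response for a request; it reuses the request's id."""
    return {"jsonrpc": "2.0", "id": request_id, "result": result}


# "get_weather" and its arguments are made up for this example.
request = make_request(
    1, "tools/call", {"name": "get_weather", "arguments": {"city": "Oslo"}}
)
# The result payload below is illustrative, not real server output.
response = make_result(
    request["id"], {"content": [{"type": "text", "text": "Sunny, 18 C"}]}
)

print(json.dumps(request, indent=2))
print(json.dumps(response, indent=2))
```

The `id` is what ties a response back to the request that caused it, which matters once several requests are in flight at the same time.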

The Data Layer handles three core responsibilities: defining the message structure (request, response, notification), managing the connection lifecycle (initialization, capability negotiation, shutdown), and exposing protocol primitives (tools, resources, and prompts — the things an MCP server can offer).
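The three message kinds can be told apart from their shape alone. The sketch below is one way to do that, assuming plain dictionaries as messages; `classify` is a hypothetical helper, and the `initialize` params are abbreviated rather than a complete handshake payload.

```python
def classify(message: dict) -> str:
    """Return 'request', 'response', or 'notification' for a JSON-RPC 2.0 message."""
    if message.get("jsonrpc") != "2.0":
        raise ValueError("not a JSON-RPC 2.0 message")
    has_id = "id" in message
    if "method" in message:
        # A method with an id expects an answer; without one it is fire-and-forget.
        return "request" if has_id else "notification"
    if has_id and ("result" in message or "error" in message):
        return "response"
    raise ValueError("unrecognized message shape")


# Abbreviated examples from the start of a session.
initialize_request = {
    "jsonrpc": "2.0", "id": 1, "method": "initialize",
    "params": {"capabilities": {}},
}
initialize_response = {"jsonrpc": "2.0", "id": 1, "result": {"capabilities": {}}}
initialized_notice = {"jsonrpc": "2.0", "method": "notifications/initialized"}

for msg in (initialize_request, initialize_response, initialized_notice):
    print(classify(msg))
```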

The Transport Layer — how to deliver it

The Transport Layer is the postal service. It doesn’t care about what’s inside the envelope — it just makes sure the envelope gets from point A to point B reliably.

MCP supports two transport mechanisms:

Stdio (Standard I/O) is used for local communication. The MCP client spawns the MCP server as a child process and they talk through stdin/stdout pipes. This is fast, requires zero network configuration, and is the default for local development tools.
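Here is a rough sketch of the stdio pattern: the client starts a child process, writes one JSON message per line to its stdin, and reads the reply from its stdout. The "server" is a toy stand-in defined inline (`TOY_SERVER`), not a real MCP server, and `call_over_stdio` is a hypothetical helper name.

```python
import json
import subprocess
import sys

# Toy stand-in for a server: reads one JSON-RPC message per line from stdin
# and answers every message that carries an id. Not a real MCP server.
TOY_SERVER = r'''
import json, sys
for line in sys.stdin:
    msg = json.loads(line)
    if "id" in msg:
        reply = {"jsonrpc": "2.0", "id": msg["id"], "result": {"echo": msg["method"]}}
        sys.stdout.write(json.dumps(reply) + "\n")
        sys.stdout.flush()
'''


def call_over_stdio(request: dict) -> dict:
    """Spawn the toy server, send one request over stdin, read one reply from stdout."""
    with subprocess.Popen(
        [sys.executable, "-c", TOY_SERVER],
        stdin=subprocess.PIPE,
        stdout=subprocess.PIPE,
        text=True,
    ) as proc:
        proc.stdin.write(json.dumps(request) + "\n")
        proc.stdin.flush()
        # Leaving the with-block closes the pipes, so the child sees EOF and exits.
        return json.loads(proc.stdout.readline())


reply = call_over_stdio({"jsonrpc": "2.0", "id": 1, "method": "ping"})
print(reply)
```

A real client would keep the child process alive for the whole session instead of spawning one per request; the one-shot form just keeps the example short.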

Streamable HTTP with Server-Sent Events (SSE) is used for remote communication. The client sends messages to the server via HTTP POST requests, and the server can push real-time updates back via SSE. This is the transport you’d use when your MCP server is running on a different machine — say, as a cloud service.
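On the HTTP side, the client's half of the exchange can be sketched as below. The request is built but never sent, so the example runs offline. The endpoint URL and both helper names are assumptions, and the SSE parser is deliberately simplified: it only collects `data:` lines and ignores the other SSE fields.

```python
import json
import urllib.request

# Hypothetical endpoint; a real server publishes its own MCP URL.
MCP_ENDPOINT = "https://example.com/mcp"


def build_post(message: dict) -> urllib.request.Request:
    """Wrap a JSON-RPC message in an HTTP POST without sending it."""
    return urllib.request.Request(
        MCP_ENDPOINT,
        data=json.dumps(message).encode("utf-8"),
        headers={
            "Content-Type": "application/json",
            # The client accepts either a plain JSON reply or an SSE stream.
            "Accept": "application/json, text/event-stream",
        },
        method="POST",
    )


def parse_sse_data(stream_text: str) -> list[dict]:
    """Collect the JSON payloads carried in the data: lines of an SSE stream."""
    messages = []
    for event in stream_text.split("\n\n"):  # events are separated by blank lines
        data_lines = [
            line[len("data:"):].strip()
            for line in event.splitlines()
            if line.startswith("data:")
        ]
        if data_lines:
            messages.append(json.loads("\n".join(data_lines)))
    return messages


req = build_post({"jsonrpc": "2.0", "id": 2, "method": "tools/list"})
print(req.get_method(), req.full_url)

# A made-up stream carrying a single event, for illustration only.
sample_stream = 'event: message\ndata: {"jsonrpc": "2.0", "id": 2, "result": {"tools": []}}\n\n'
print(parse_sse_data(sample_stream))
```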

As an engineer who’s spent years building APIs and distributed systems, MCP feels like a natural evolution. It takes patterns we already know (request/response messaging, service discovery, middleware pipelines) and adapts them for the AI agent era. The protocol is intentionally simple: JSON-RPC 2.0 messages flowing over either local stdio or remote HTTP, with a clean lifecycle of initialize → discover → execute.
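The initialize → discover → execute lifecycle can be walked through with an in-memory toy. `ToyServer` and its `add` tool are hypothetical, and the sketch skips the JSON-RPC envelope and the transport entirely so that only the order of operations is visible.

```python
class ToyServer:
    """In-memory stand-in for an MCP server exposing one hypothetical tool."""

    def __init__(self):
        self.initialized = False

    def handle(self, method, params=None):
        params = params or {}
        if method == "initialize":
            self.initialized = True
            return {"capabilities": {"tools": {}}}
        if not self.initialized:
            raise RuntimeError("initialize must come first")
        if method == "tools/list":
            return {"tools": [{"name": "add", "description": "Add two numbers"}]}
        if method == "tools/call" and params.get("name") == "add":
            args = params["arguments"]
            return {"content": [{"type": "text", "text": str(args["a"] + args["b"])}]}
        raise ValueError(f"unknown method: {method}")


server = ToyServer()

# 1. initialize: both sides agree on capabilities before anything else.
server.handle("initialize", {"capabilities": {}})

# 2. discover: the client asks what the server offers.
tools = server.handle("tools/list")["tools"]

# 3. execute: the client invokes a tool it just discovered.
result = server.handle(
    "tools/call", {"name": tools[0]["name"], "arguments": {"a": 2, "b": 3}}
)
print(result["content"][0]["text"])  # prints 5
```

Because the client learns the tool name from `tools/list` instead of hard-coding it, the same client code works against any server that follows the protocol.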